From Vibe Coding to Production: Why AI-Generated Code Still Needs Engineering Discipline

- I Chishti

- 4 days ago

The developer who uses AI to write faster is an asset. The team that uses AI without discipline is a liability.

"Move fast and break things" is a fine philosophy for a startup MVP. It is a catastrophic one for production software that handles real users, real data, and real money. AI may let you write code up to 10x faster, but it cannot replace the engineering discipline that keeps 10x more lines of code from becoming 10x more problems.

Vibe coding is real, it is exciting, and it is changing how software gets built. Developers who once spent hours wrestling with boilerplate, configuration, and syntax can now describe intent in plain English and get working code back in seconds. Tools like GitHub Copilot, Cursor, Devin, and Amazon Q Developer have made this the default experience for millions of engineers in 2025 and 2026.

But here is what nobody is talking about loudly enough: AI generates code at the speed of thought, and most of that code goes to production. The problem is not the code generation — it is what happens next. Architecture decisions get skipped. Tests get deferred. Security reviews get abbreviated. Technical debt accumulates not over months but over hours. The speed of AI output has outrun the speed of human review, and the gap is dangerous.

This is not an argument against AI-assisted development. It is an argument for the engineering discipline that makes AI-generated code safe to ship. Speed without structure is how you end up with a beautiful prototype that becomes a maintenance nightmare at scale. Let us break down exactly what that discipline looks like, why it matters more in an AI-accelerated world, and how to embed it into your team's daily practice.

The Honest Problem with AI-Accelerated Delivery

Before we talk solutions, let us name the problem precisely — because it is not what most teams think it is.

The problem is not that AI writes bad code. Most of the time, AI writes perfectly adequate code. It follows patterns, it is syntactically correct, it often handles the happy path elegantly. The problem is that "adequate code" and "production-ready code" are not the same thing, and the difference between them has always been engineering discipline: the decisions made around the code, not just in it.

When a developer wrote every line manually, the act of writing was itself a forcing function. You thought about what you were building as you built it. You noticed the edge case when you reached the conditional branch. You reconsidered the data model when the query became awkward. That friction — the kind that slowed you down — was also the kind that caught problems early.

AI removes that friction. That is mostly a good thing. But it also removes the natural checkpoints that friction provided, the moments where discipline was built into the work. Teams that do not consciously replace those checkpoints end up with codebases that are fast to produce and expensive to maintain.

There is a second, subtler problem: confidence inflation. AI-generated code looks finished. It has docstrings, it has variable names, it is formatted. It does not look like a first draft. Teams that are under deadline pressure — which is every team — are strongly incentivised to treat it as final. The code looks complete. It passed the linter. Ship it.

This is how production incidents are born.

The Five Engineering Disciplines That AI Cannot Skip For You

Here is the discipline framework that production-grade AI-assisted development requires. None of this is new — experienced engineers know these practices. What is new is that the pressure to skip them has never been higher, and the consequences of skipping them have never accumulated faster.

1. Architecture Before Acceleration

AI is an excellent implementer and a poor architect. It will build whatever you ask it to build — but it will not tell you whether you should be building it that way. Architecture decisions — how services communicate, where state lives, how the data model will evolve, how the system will behave under load — these must be made by humans before AI writes a single line.

The discipline here is sequencing. Before you prompt, design. Even a rough Architecture Decision Record (ADR) written in plain English takes thirty minutes and saves thirty hours of refactoring. Teams that skip this step because "we can just ask the AI to refactor later" invariably discover that AI-assisted refactoring of AI-generated code at scale is one of the most painful experiences in modern software engineering.

Specific practice: before any feature involving new services, data models, or integration points, require a written architecture note — not a formal document, just a clear statement of the approach — to be reviewed before AI implementation begins.
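
One way to make this practice harder to skip is a small pre-merge check. The sketch below is illustrative only: it assumes architecture notes live under a hypothetical `docs/adr/` directory and that changes under `services/`, `models/`, or `integrations/` are the ones that need a note. It also only verifies that a note ships with the change, which is a weaker proxy for "reviewed before implementation begins", so treat it as a backstop rather than the practice itself.

```python
from pathlib import PurePosixPath

# Hypothetical conventions; adjust to your own repository layout.
ARCHITECTURE_SENSITIVE_DIRS = ("services", "models", "integrations")
ADR_PREFIX = "docs/adr/"


def _top_level_dir(path: str) -> str:
    """Return the first path component, or an empty string for an empty path."""
    parts = PurePosixPath(path).parts
    return parts[0] if parts else ""


def needs_architecture_note(changed_files: list[str]) -> bool:
    """True if any changed file sits under an architecture-sensitive directory."""
    return any(_top_level_dir(p) in ARCHITECTURE_SENSITIVE_DIRS for p in changed_files)


def has_architecture_note(changed_files: list[str]) -> bool:
    """True if the change adds or edits at least one file under the ADR directory."""
    return any(p.startswith(ADR_PREFIX) for p in changed_files)


def check_change(changed_files: list[str]) -> bool:
    """Pass if no note is needed, or if one is included in the same change."""
    if not needs_architecture_note(changed_files):
        return True
    return has_architecture_note(changed_files)
```

In a pipeline, `changed_files` would typically come from the diff against the target branch; how that list is produced depends on your CI system and is left out here.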

2. Tests That Verify Intent, Not Just Behaviour

AI is very good at writing tests. It is also very good at writing tests that pass. These are not the same thing.

The structural problem with AI-generated tests is that they validate what the code does, not what the code is supposed to do. An AI writing tests for its own implementation will write tests that reflect the assumptions baked into that implementation — including the wrong ones. Coverage metrics become actively misleading: you can have 95% test coverage and 0% confidence, because none of those tests are checking the business outcome.

The discipline here is ownership. Human engineers must own the acceptance criteria and the acceptance tests. AI can write unit test scaffolding. AI can generate test cases for edge conditions once the intent is established. But the test that says "a user who has not completed onboarding cannot access paid features" must be written by someone who understands the business rule, not derived from the implementation.

Specific practice: for every user story, a human writes the acceptance test before AI implementation begins. This is not a new idea — it is test-driven development applied to the AI era.
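
As a minimal sketch, the onboarding rule mentioned above could be pinned down as a human-written acceptance test like the one below. The `User` model and the `can_access_paid_features` function are hypothetical names invented for this example; the implementation shown is only a placeholder for whatever the AI would later be asked to produce.

```python
from dataclasses import dataclass


@dataclass
class User:
    """Hypothetical user model with just enough state to express the rule."""
    has_completed_onboarding: bool
    has_paid_plan: bool


def can_access_paid_features(user: User) -> bool:
    """Placeholder implementation; in practice this is the part AI would write."""
    return user.has_completed_onboarding and user.has_paid_plan


def test_user_without_onboarding_cannot_access_paid_features() -> None:
    # Given a paying user who has not completed onboarding
    user = User(has_completed_onboarding=False, has_paid_plan=True)
    # When they try to reach a paid feature
    allowed = can_access_paid_features(user)
    # Then access is refused
    assert allowed is False


def test_onboarded_paying_user_can_access_paid_features() -> None:
    # Given a paying user who has completed onboarding
    user = User(has_completed_onboarding=True, has_paid_plan=True)
    # Then access is granted
    assert can_access_paid_features(user) is True
```

The point of the design is that the test names and the Given/When/Then comments state the business rule in the team's own words, so they stay valid even if the implementation is regenerated.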

3. Security as a First-Class Gate, Not an Afterthought

AI coding tools are trained predominantly on legitimate code written for legitimate purposes. This makes them structurally weak at adversarial thinking. They do not naturally anticipate SQL injection through a field that is internal today but will start receiving user input three months from now. They do not notice that the API endpoint being built has no rate limiting because nothing in the prompt mentioned rate limiting.

In a world where AI generates more code faster, the attack surface expands at the same rate — unless security review is treated as a mandatory gate, not an optional pass. The good news is that AI security tooling has kept pace: tools like Snyk, Semgrep, and Amazon Q's security scanning can be embedded in the pipeline as automated first-pass reviewers. The bad news is that automated scanning catches known patterns, not novel architectural vulnerabilities. Human security review cannot be replaced.

Specific practice: all AI-generated code touching authentication, authorisation, data persistence, external APIs, or user input must pass both automated security scanning and a brief human security review before merge. This is non-negotiable regardless of time pressure.
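
To illustrate the kind of issue such a review looks for, the sketch below contrasts a query built by string interpolation with a parameterised one, using Python's standard `sqlite3` module. The `users` table and both function names are made up for the example.

```python
import sqlite3


def find_user_unsafe(conn: sqlite3.Connection, name: str) -> list[tuple]:
    # Vulnerable: the input is interpolated directly into the SQL text,
    # so a crafted value can change the meaning of the query.
    query = f"SELECT id, name FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn: sqlite3.Connection, name: str) -> list[tuple]:
    # Safer: the value is bound as a parameter and is never parsed as SQL.
    return conn.execute(
        "SELECT id, name FROM users WHERE name = ?", (name,)
    ).fetchall()


def make_demo_db() -> sqlite3.Connection:
    """Build a throwaway in-memory database with two example rows."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
    conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)])
    return conn
```

With the input `x' OR '1'='1`, the unsafe version returns every row in the table, while the parameterised version returns nothing because no user has that literal name. Both functions look equally "finished" on the page, which is why the difference is easy to miss in a hurried review.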

4. Code Review That Reviews, Not Rubber-Stamps

The volume of code produced by AI-augmented teams is a code review challenge at a scale most engineering cultures have not encountered before. The natural response — reviewing AI PRs faster because they look cleaner — is the wrong response.

AI-generated code requires a different kind of review than human-generated code. You are not looking for typos or formatting issues. You are looking for coherence: does this code belong in this codebase? Does it duplicate something that already exists? Does it introduce a pattern inconsistent with how we have done things elsewhere? Does it handle failure modes correctly? Does it make implicit assumptions that will break in production?

These questions require a reviewer who understands the system — not just the PR. Assigning AI-generated PRs to reviewers who do not have codebase context, or reviewing AI PRs in the three-minute slots between meetings, produces a false signal: approved PRs that carry hidden risk.

Specific practice: tag all AI-generated PRs explicitly. Route them to reviewers with relevant codebase context. Set a minimum review time expectation — not a rubber stamp, a genuine review. Use AI code review tools (CodeRabbit, Qodo) as a first pass to surface obvious issues, freeing human reviewers to focus on deeper concerns.
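
A rough sketch of how the tagging, routing, and minimum-review expectations might be encoded follows. Everything named here is an assumption for illustration: the `ai-generated` label, the 15-minute threshold, and the idea that reviewer expertise is available as a simple mapping of names to codebase areas. Measuring real review time is harder than this suggests.

```python
from dataclasses import dataclass

# Hypothetical policy values; pick your own.
AI_LABEL = "ai-generated"
MIN_REVIEW_MINUTES = 15


@dataclass
class PullRequest:
    labels: set[str]
    touched_areas: set[str]  # e.g. {"billing", "search"}


@dataclass
class Review:
    reviewer: str
    minutes_spent: int


def eligible_reviewers(pr: PullRequest, expertise: dict[str, set[str]]) -> list[str]:
    """Reviewers whose known areas overlap the areas the PR touches."""
    return sorted(name for name, areas in expertise.items() if areas & pr.touched_areas)


def review_is_acceptable(
    pr: PullRequest, review: Review, expertise: dict[str, set[str]]
) -> bool:
    """Apply the policy to tagged PRs; untagged PRs are out of scope here."""
    if AI_LABEL not in pr.labels:
        return True
    has_context = review.reviewer in eligible_reviewers(pr, expertise)
    return has_context and review.minutes_spent >= MIN_REVIEW_MINUTES
```

For example, with `expertise = {"dana": {"billing"}, "eli": {"search"}}`, a tagged PR touching `billing` would be routed to `dana`, and a three-minute approval from anyone would not count.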

5. Technical Debt Accounting, Done Weekly

Technical debt has always accumulated in software projects. AI-accelerated development has changed the rate of accumulation by an order of magnitude. What used to take a quarter to become a crisis can now take a sprint.

The solution is not to slow down AI output but to make technical debt visible and deliberate. Every week, the team should do a brief debt accounting: what shortcuts were taken, what decisions were deferred, what parts of the codebase are now fragile. This is not a post-mortem activity. It is a weekly hygiene practice.

Specific practice: allocate 10–15% of every sprint to debt remediation as a fixed budget, not a discretionary one. When AI helps you ship three features in the time it used to take to ship one, use a portion of that gain to keep the codebase healthy. This is the engineering equivalent of double-entry bookkeeping — every acceleration entry needs a corresponding maintenance entry.
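
The arithmetic behind a fixed budget is simple enough to write down. The helper below is a hypothetical sketch that assumes sprint capacity is tracked in story points; any unit of capacity works the same way.

```python
def debt_budget(
    sprint_capacity: float, low_percent: int = 10, high_percent: int = 15
) -> tuple[float, float]:
    """Return the (minimum, maximum) capacity to reserve for debt remediation."""
    if sprint_capacity < 0:
        raise ValueError("sprint capacity cannot be negative")
    return (
        sprint_capacity * low_percent / 100,
        sprint_capacity * high_percent / 100,
    )
```

For a team with a capacity of 40 points, `debt_budget(40)` reserves between 4 and 6 points for remediation, and that reservation is made before feature work is planned rather than from whatever is left over.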

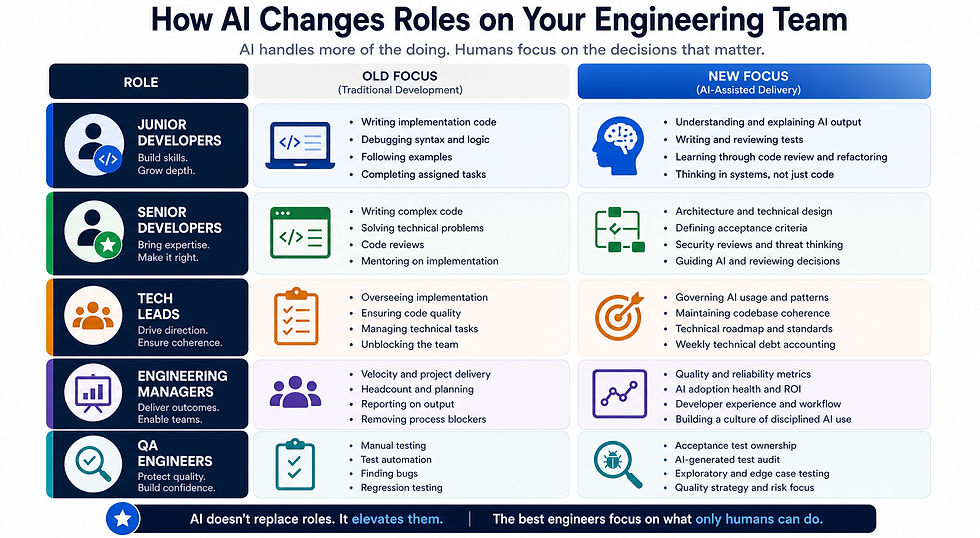

How This Changes Roles on Your Team

Embedding AI discipline is not just about adding process steps — it requires rethinking what different roles are responsible for.

The most important shift is for senior developers and tech leads. If your best engineers are still writing CRUD boilerplate and basic business logic in 2026, you have an adoption gap. They should be defining the architecture AI implements, writing the acceptance tests AI scaffolds around, and reviewing the decisions AI cannot make. AI frees senior engineers to do what only senior engineers can do. That reallocation only happens if it is made deliberate.

What Breaks When You Skip the Discipline

It is easy to argue for discipline in the abstract. It is more useful to be concrete about what goes wrong when it is absent.

The Coherence Collapse. Six developers use AI independently for six weeks on the same codebase. Each developer's AI session makes slightly different suggestions, because each one sees a different slice of the codebase in its context window. Six weeks later, you have six patterns for error handling, four approaches to database access, and three different abstractions for the same concept. None of it is wrong individually. All of it is a nightmare to maintain collectively.

The Test Theatre Problem. A team reports 90% test coverage. Leadership is satisfied. A production incident reveals that the entire payment processing flow had tests that validated the happy path only. The edge case — a partial authorisation during a network timeout — was never tested because neither the AI nor the human thought to specify it.

The Security Blindspot. An AI-generated API endpoint handles file uploads correctly, validates file types, and returns appropriate errors. What it does not do is validate that the authenticated user has permission to upload to the specified directory. No one noticed during review because the code looked complete. A penetration test three months later finds it in twenty minutes.

The Refactoring Spiral. A prototype built with AI in a weekend to prove a concept gets pushed to production "temporarily." Six months later it handles £2 million in transactions per day, and an architecture that was never designed for that load makes every change risky. The team that was going to fix it properly is now occupied fixing the incidents the architecture causes.

These are not hypothetical scenarios. They are patterns appearing in real post-mortems at real companies in 2025 and 2026.
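
To make the Security Blindspot scenario concrete, here is a minimal sketch of the check that was missing. The function names, the extension allow-list, and the idea that each user has a set of directories they may write to are all assumptions for the example. The path-traversal rejection goes slightly beyond what the scenario describes and is included because the two issues tend to travel together.

```python
from pathlib import PurePosixPath

ALLOWED_EXTENSIONS = {".png", ".jpg", ".pdf"}  # hypothetical allow-list


def is_within(directory: PurePosixPath, root: PurePosixPath) -> bool:
    """True if directory is root itself or nested somewhere beneath it."""
    return directory == root or root in directory.parents


def can_upload(user_dirs: set[str], target_dir: str, filename: str) -> bool:
    # Check 1 (present in the scenario): validate the file type.
    if PurePosixPath(filename).suffix.lower() not in ALLOWED_EXTENSIONS:
        return False
    # Extra guard (an assumption of this sketch): refuse ".." segments outright.
    target = PurePosixPath(target_dir)
    if ".." in target.parts:
        return False
    # Check 2 (missing in the scenario): may this user write to the target?
    return any(is_within(target, PurePosixPath(d)) for d in user_dirs)
```

A user allowed to write under `/uploads/alice` can upload `cat.png` to `/uploads/alice/photos`, but not to `/uploads/bob`. An endpoint with only the first check would have accepted both.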

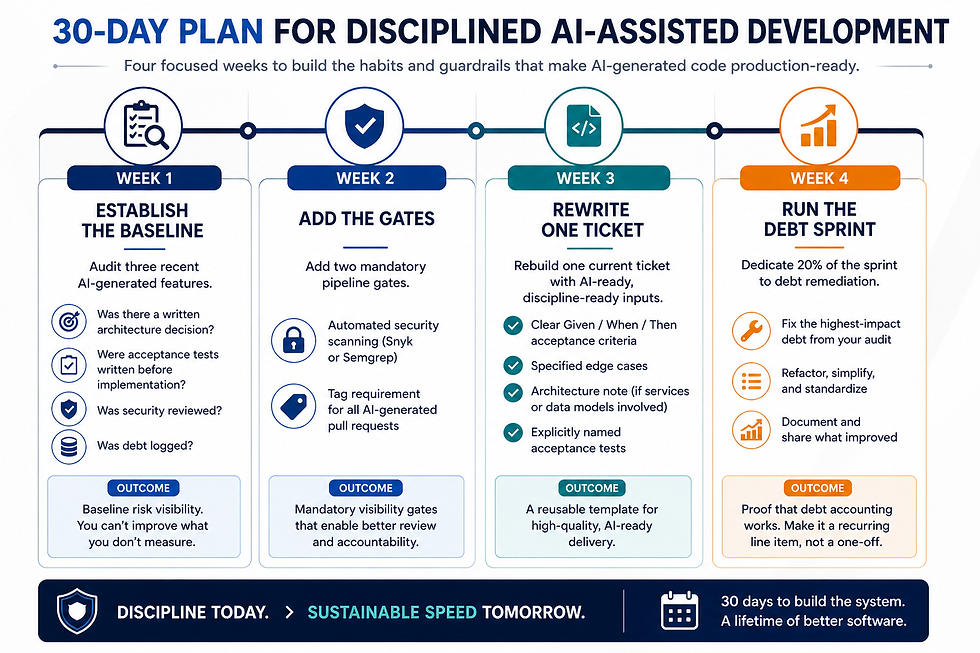

Your 30-Day Discipline Implementation Plan

Week 1 — Establish the Baseline. Audit three recent AI-generated features. For each one, score: was there a written architecture decision? Were acceptance tests written before implementation? Was security reviewed? Was debt logged? Most teams will score poorly. That is the point. You cannot improve what you have not measured.

Week 2 — Add the Gates. Add two mandatory pipeline gates: automated security scanning (Snyk or Semgrep) and a tag requirement for AI-generated PRs. These cost nothing in human time and create the visibility needed for better review practices.

Week 3 — Rewrite One Ticket. Take a current sprint ticket and rebuild its acceptance criteria to be AI-ready and discipline-ready: clear Given/When/Then criteria, specified edge cases, a written architecture note if services or data models are involved, and explicitly named acceptance tests. Use this ticket as the template for all future tickets.

Week 4 — Run the Debt Sprint. Dedicate 20% of the sprint to remediation of the technical debt most exposed by the Week 1 audit. This is not catch-up — it is proof of concept that debt accounting works. Share the results with the team and with leadership. Make it a recurring line item, not a one-off.
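
The Week 1 audit can be as lightweight as a four-question scorecard per feature. The sketch below is one possible shape for it; the class and field names are invented for illustration, and the equal weighting of the four questions is an assumption rather than a recommendation from the plan.

```python
from dataclasses import dataclass


@dataclass
class FeatureAudit:
    """Answers to the four Week 1 questions for one AI-generated feature."""
    name: str
    had_architecture_note: bool
    tests_written_first: bool
    security_reviewed: bool
    debt_logged: bool

    def score(self) -> int:
        """Number of the four discipline checks this feature passed (0 to 4)."""
        return sum(
            [
                self.had_architecture_note,
                self.tests_written_first,
                self.security_reviewed,
                self.debt_logged,
            ]
        )


def baseline(audits: list[FeatureAudit]) -> float:
    """Average fraction of checks passed across all audited features."""
    if not audits:
        raise ValueError("audit at least one feature")
    return sum(a.score() for a in audits) / (4 * len(audits))
```

Re-running the same scorecard after Week 4 gives a like-for-like number to share with leadership alongside the debt sprint results.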

The Bottom Line

AI-assisted development is not a phase. It is the new baseline for how software gets built. The teams that will win are not the ones that use AI the most — they are the ones that use AI with the most discipline.

Speed is not the competitive advantage. Sustainable speed is. A team that ships three features a week for fifty weeks (150 features) beats a team that ships ten features a week for three weeks (30 features) and then spends the rest of the year firefighting the consequences.

The engineering practices that make software reliable — architecture thinking, test discipline, security rigour, code review, debt management — were not invented to slow you down. They were invented because building software without them slows you down eventually, at the worst possible moment. AI has not changed that truth. It has made it more urgent.

The question is not whether to apply engineering discipline to AI-generated code. The question is whether to build that discipline into your team now, proactively, or to rediscover it the hard way when a production incident forces your hand.

CluedoTech helps organisations design and implement practical AI strategies for engineering teams. If you are thinking about AI-augmented software delivery — done right — get in touch.